AngularJS (1.x) was once a very popular front-end framework, and many applications built with it still run smoothly today.

As technology evolves, teams want to move to modern Angular (2+) for its TypeScript support, cleaner architecture, better tools, and long-term maintenance.

However, rewriting a large AngularJS project from scratch can be time-consuming and risky.

That’s why many developers choose to run AngularJS and Angular together in a hybrid setup — this approach saves time and costs while still ensuring an effective migration process and keeping the system running normally.

To make AngularJS and Angular work together, the Angular team released an official package called @angular/upgrade.

It acts as a bridge between the two frameworks, allowing them to share the same DOM, services, and data.

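You can install it with npm. The `@angular/upgrade/static` entry point used by hybrid apps ships inside the same package, so there is nothing else to install:

```shell
npm install @angular/upgrade
```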

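With this tool, you can run both frameworks on the same page, use Angular components inside AngularJS templates, and inject existing AngularJS services into Angular code.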

This is an official and stable migration solution, fully supported by the Angular team — not a workaround or a temporary solution.

Step 1: Bootstrap Both Frameworks

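In your main entry file, bootstrap the Angular root module first. That module imports `UpgradeModule` from `@angular/upgrade/static` and, in its `ngDoBootstrap()` method, calls `upgrade.bootstrap(document.body, ['hybridApp'])` to start the AngularJS module inside the running Angular application. Remove any `ng-app` attribute from the page so that AngularJS is not bootstrapped twice.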

Step 2: Downgrade an Angular Component

Suppose you have an Angular component like this:

```typescript
@Component({ selector: 'hello-ng', template: 'Hello from Angular' })
export class HelloNgComponent {}
```

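To make it available inside AngularJS templates, wrap it with `downgradeComponent` from `@angular/upgrade/static` and register the result as an AngularJS directive named `helloNg` on the `hybridApp` module.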

Now you can use it directly in AngularJS HTML:

```html
<hello-ng></hello-ng>
```

Step 3: Upgrade an AngularJS Service

You can also reuse existing AngularJS services inside Angular:

```typescript
angular.module('hybridApp').service('LegacyService', function () {
  this.getData = () => 'data from AngularJS';
});
```

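For the injection below to work, Angular needs a provider that fetches the service from the AngularJS `$injector`. The provider is named `legacyServiceProvider` here purely for illustration; add it to the `providers` array of your root module.

```typescript
import { Inject, Injectable } from '@angular/core';

// Hands the AngularJS service over to Angular's dependency injection.
export const legacyServiceProvider = {
  provide: 'LegacyService',
  useFactory: ($injector: any) => $injector.get('LegacyService'),
  deps: ['$injector'],
};

@Injectable({ providedIn: 'root' })
export class LegacyAdapter {
  constructor(@Inject('LegacyService') private legacy: any) {}

  getData() {
    return this.legacy.getData();
  }
}
```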

Step 4: Split Routing Clearly

Keep routing for both frameworks separate to prevent conflicts:

Use ngRoute or ui-router for AngularJS pages.

Use @angular/router for Angular modules.

Define clear URL boundaries, for example:

/legacy/* handled by AngularJS

/app/* handled by Angular

This ensures smooth navigation and stable behavior, while you continue upgrading parts of the application step by step.
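The URL boundary above can be sketched as a small, framework-free function. The name `ownerOf` and the `'unclaimed'` fallback are assumptions made for this illustration, not part of either framework. In a real hybrid app, a check like this would typically sit behind Angular's `UrlHandlingStrategy.shouldProcessUrl`, so the Angular router ignores the legacy URLs.

```typescript
// Hypothetical helper: decides which framework owns a given URL,
// using the /legacy/* and /app/* boundaries described above.
type Owner = 'angular' | 'angularjs' | 'unclaimed';

function ownerOf(url: string): Owner {
  // Drop the query string and fragment; only the path matters here.
  const path = url.split(/[?#]/)[0];
  if (path === '/app' || path.indexOf('/app/') === 0) return 'angular';
  if (path === '/legacy' || path.indexOf('/legacy/') === 0) return 'angularjs';
  // Anything else belongs to neither side and should be handled explicitly.
  return 'unclaimed';
}

console.log(ownerOf('/app/orders?id=1')); // angular
console.log(ownerOf('/legacy/reports'));  // angularjs
console.log(ownerOf('/application'));     // unclaimed
```

Matching on `/app/` with the trailing slash, rather than on `/app`, keeps look-alike paths such as `/application` from being claimed by the wrong framework.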

Running AngularJS and Angular together may sound complicated, but with the official upgrade tools, it’s actually quite manageable.

This approach offers several key benefits:

No need to rewrite the entire application.

Safe, gradual migration — one module at a time.

Full compatibility with your existing system.

Freedom to create new features using modern Angular.

While this hybrid setup isn’t meant to be permanent, it serves as a practical bridge between old and new technologies, helping you modernize your app step by step without breaking what already works.

Whether you need scalable software solutions, expert IT outsourcing, or a long-term development partner, ISB Vietnam is here to deliver. Let’s build something great together—reach out to us today. Or click here to explore more ISB Vietnam's case studies.

[References]

https://v17.angular.io/guide/upgrade

April 23, 2026

In today’s IT world, we are surrounded by AI talk. Whether you are a developer, a project manager, or an IT translator, understanding these concepts is no longer optional; it is essential. However, the technical jargon can be overwhelming. Let’s break down the most important AI terms into simple, real-world ideas.

To understand how AI is built, imagine a set of Russian Dolls (Matryoshka) where one sits inside the other.

The largest, outermost doll is Artificial Intelligence (AI). This is the broad goal of creating machines that can mimic human intelligence. In the early days, this was done using fixed rules. Think of a chess-playing robot from the 90s; it didn't "learn" anything, it just followed a long list of "If-Then" instructions written by a human.

Inside that is the middle doll: Machine Learning (ML). This is a smarter way to reach the goal of AI. Instead of writing every rule, we give the machine a massive amount of data and let it find patterns on its own. A classic example is a Spam Filter. You show the system 10,000 "Spam" emails and 10,000 "Real" emails. The machine eventually notices that spam often contains words like "FREE" or "WINNER" and starts blocking them automatically without being told exactly what to look for.

Finally, the smallest doll at the center is Deep Learning (DL). This is a subset of ML that uses multi-layered "Neural Networks", loosely inspired by the human brain, to handle very messy data such as images, audio, and free text. This technology is what powers the Face-Unlock feature on your phone. To recognize your face, even if you grow a beard, wear glasses, or get older, the AI relies on DL to analyze thousands of tiny details in your features.

Moving to language, we often hear about Large Language Models (LLMs). These are deep learning models, like the ones behind ChatGPT or Gemini, that are trained on enormous amounts of text, much of it from the internet. You can think of an LLM as a super-advanced "Auto-complete." If you type "The capital of France is...", it predicts that the next word is "Paris" because it has seen that pattern many times before.
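To make the "Auto-complete" idea concrete, here is a toy sketch that counts which word follows which in a tiny, made-up corpus and then predicts the most frequent follower. It is only an illustration: real LLMs use neural networks over tokens, not word counts, and the names `trainBigrams` and `predictNext` are invented for this example.

```typescript
// word -> (next word -> how many times that pair was seen)
type Counts = Record<string, Record<string, number>>;

function trainBigrams(corpus: string[]): Counts {
  const counts: Counts = Object.create(null);
  for (const sentence of corpus) {
    const words = sentence.toLowerCase().split(/\s+/).filter(w => w.length > 0);
    for (let i = 0; i + 1 < words.length; i++) {
      const current = words[i];
      const next = words[i + 1];
      if (!counts[current]) counts[current] = Object.create(null);
      counts[current][next] = (counts[current][next] || 0) + 1;
    }
  }
  return counts;
}

// Returns the follower seen most often, or undefined for an unseen word.
function predictNext(model: Counts, word: string): string | undefined {
  const followers = model[word.toLowerCase()];
  if (!followers) return undefined;
  let best: string | undefined = undefined;
  let bestCount = 0;
  for (const candidate of Object.keys(followers)) {
    if (followers[candidate] > bestCount) {
      best = candidate;
      bestCount = followers[candidate];
    }
  }
  return best;
}

const model = trainBigrams([
  'the capital of france is paris',
  'the capital of france is paris',
  'the capital of japan is tokyo',
]);
console.log(predictNext(model, 'is')); // paris (seen twice, tokyo only once)
```

The sketch also shows the limits of pure pattern matching: it answers "paris" after "is" no matter which country came before, because it only looks at a single previous word.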

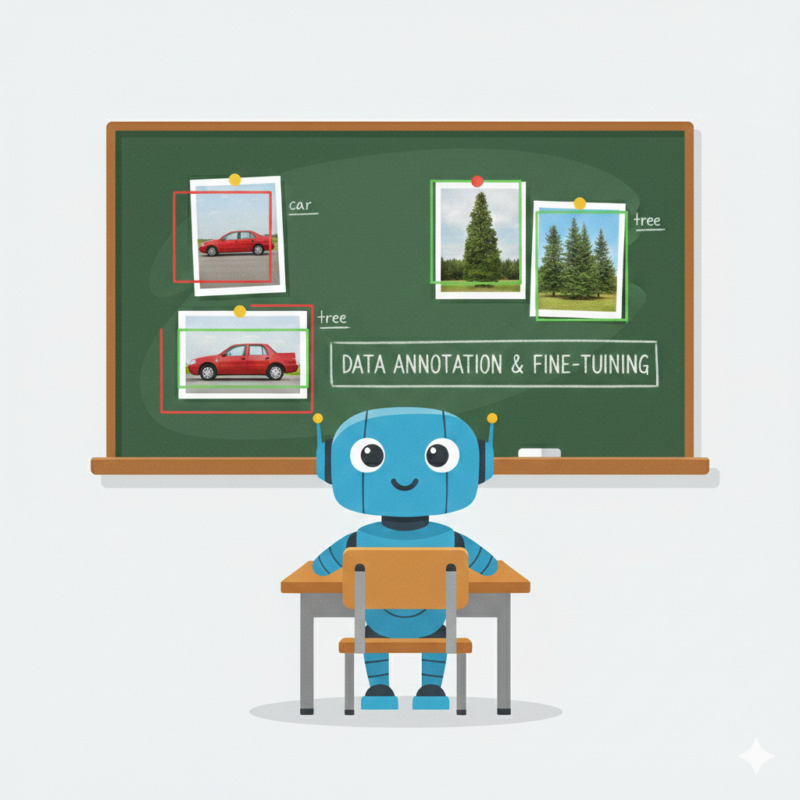

Before an AI can work, it must undergo a rigorous learning process. This starts with Annotation (or Data Labeling), which we can think of as the "Teacher Phase." AI doesn't inherently understand the world; it needs to be told exactly what it’s looking at. Humans, known as Annotators, must look at thousands of data points and "tag" them.

For example, for a Self-Driving Car to function, humans must manually draw boxes around objects in street photos, labeling them as "Tree," "Stop Sign," or "Pedestrian." This provides the AI with "Ground Truth"—the factual foundation it needs to perceive reality. Without this meticulous human help, the AI is essentially blind.

Once an AI has gained broad, general knowledge from its initial training, we can give it "extra lessons" through a process called Fine-tuning. Instead of building a new model from scratch, we take a pre-trained general AI and train it further on a specific dataset, like 5,000 legal contracts. Through this specialization, it stops being a general chatbot and becomes a legal assistant that handles the vocabulary and nuances of contracts far better. This approach is highly efficient, saving both time and massive computing costs.

However, one of the most common problems in training AI is Overfitting. This happens when an AI learns the training data too perfectly: it memorizes the specific examples instead of learning the logic.

To understand this, let’s look at a simple example: Teaching an AI to recognize a "Bird."

Imagine you give the AI thousands of photos of birds to study. However, there is a small problem: every bird in your photos is red.

When you test the AI with a photo of a blue bird, the AI will say: "This is NOT a bird" because it isn't red. The AI failed because it didn't learn the "logic" of what a bird is; it only memorized the "color" from your specific photos.

In the IT world, we want our AI to have Generalization—the ability to handle new, unseen situations correctly, rather than just memorizing old data.

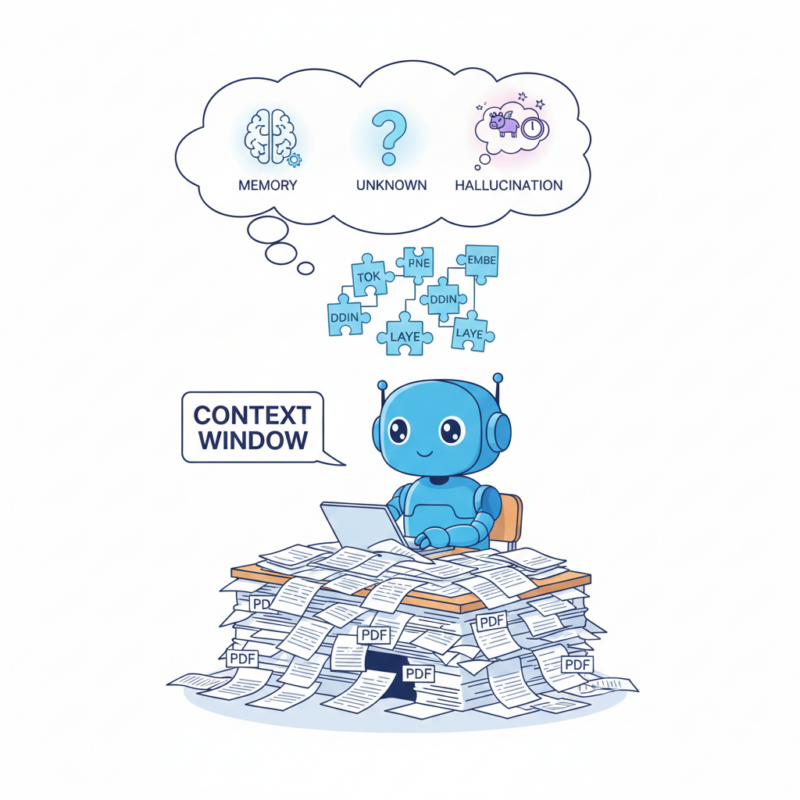

Once we understand how AI learns, we need to look at how it actually "reads" our instructions. While we see words and sentences, the AI sees the world through Tokens. Think of tokens as the "atoms" of language: small fragments that the AI uses to turn our text into numbers it can calculate. A common word like "apple" might be just one token, but a longer one like "terminology" may get chopped into pieces such as termin, olo, and gy. The exact split depends on the model's tokenizer.

This isn't just a technical detail; it’s the "currency" of AI. Most AI companies charge you based on how many tokens you send in and how many the AI spits out. Interestingly, for us in the IT world, this means language matters. Because of how AI is built, languages like Vietnamese often require more tokens than English to say the same thing, making the "cost" of a conversation slightly different depending on the language you use.
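A rough sketch of how token counts turn into cost is shown below. Everything in it is an assumption for illustration: `toyTokenize` simply cuts words into chunks of up to five characters (real tokenizers use learned vocabularies), and the prices in the example are made up rather than taken from any provider.

```typescript
// Toy tokenizer: splits on whitespace, then cuts each word into
// chunks of at most chunkSize characters. Not a real tokenizer.
function toyTokenize(text: string, chunkSize: number = 5): string[] {
  const tokens: string[] = [];
  const words = text.split(/\s+/).filter(w => w.length > 0);
  for (const word of words) {
    for (let i = 0; i < word.length; i += chunkSize) {
      tokens.push(word.slice(i, i + chunkSize));
    }
  }
  return tokens;
}

// Hypothetical price list, in USD per one million tokens.
interface Pricing {
  inputPerMillion: number;
  outputPerMillion: number;
}

// Input and output tokens are billed separately and then added up.
function estimateCost(inputTokens: number, outputTokens: number, pricing: Pricing): number {
  return (inputTokens * pricing.inputPerMillion + outputTokens * pricing.outputPerMillion) / 1000000;
}

const tokens = toyTokenize('apple terminology');
console.log(tokens.length); // 4 tokens: apple, termi, nolog, y

const examplePricing: Pricing = { inputPerMillion: 2, outputPerMillion: 8 };
console.log(estimateCost(1000, 500, examplePricing)); // 0.006
```

Because the bill depends on the token count rather than the character count, the same message can cost more in a language that the tokenizer splits into more pieces.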

But these tokens don't just cost money; they also take up space. Every AI model works within a Context Window, which acts as its short-term memory. Imagine the AI is a brilliant employee sitting at a very small desk. Every PDF you upload, every old message in the chat, and every instruction you give must fit on that desk for the AI to "see" it.

If your conversation gets too long and exceeds the "desk space," the AI has to start throwing the oldest papers into the trash to make room for new ones. This is exactly why, after a long brainstorming session, the AI might suddenly forget your name or the very first rule you set—it simply ran out of room on its desk.
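The "small desk" can be sketched as a function that keeps only the newest messages that fit into a token budget. This is a simplified illustration, not how any particular product trims its history: `fitToWindow` and `ChatMessage` are invented names, and `countTokens` just counts words as a stand-in for a real tokenizer.

```typescript
interface ChatMessage {
  role: 'system' | 'user' | 'assistant';
  text: string;
}

// Stand-in token counter: one token per whitespace-separated word.
function countTokens(text: string): number {
  return text.split(/\s+/).filter(w => w.length > 0).length;
}

// Walks backwards from the newest message and keeps messages until the
// budget is used up; everything older falls off the desk.
function fitToWindow(messages: ChatMessage[], maxTokens: number): ChatMessage[] {
  const kept: ChatMessage[] = [];
  let used = 0;
  for (let i = messages.length - 1; i >= 0; i--) {
    const cost = countTokens(messages[i].text);
    if (used + cost > maxTokens) break;
    kept.unshift(messages[i]);
    used += cost;
  }
  return kept;
}

const chat: ChatMessage[] = [
  { role: 'user', text: 'My name is An' },
  { role: 'assistant', text: 'Nice to meet you An' },
  { role: 'user', text: 'Suggest three project names please' },
];
const visible = fitToWindow(chat, 10);
console.log(visible.length); // 2: the first message no longer fits
```

With a budget of 10 tokens, the message that introduced the user's name is the one that gets dropped, which matches the "forgot my name" behavior described above.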

Nowadays, tech giants are racing to build "giant desks," expanding these context windows so AI can "read" an entire book series in one go. But even with a massive memory, AI has a famous flaw: it loves to tell "confident lies," a phenomenon we call Hallucination.

You see, at its heart, an AI is a high-speed probability engine. It doesn't actually check a "truth database" to see if a fact is real. Instead, it asks itself: "Given the words I've seen so far, what is the most likely next word?" If it doesn't have the exact answer, it won't simply say "I don't know" (unless we tell it to). Instead, it will keep predicting the next word to finish the sentence.

This is how you get professional-sounding answers about non-existent coding functions that look perfect but return an undefined error the moment you run them. The AI prioritizes sounding logical and grammatically perfect over being factually true. For those of us working as Developers or PMs, this is the ultimate reminder: never trust numbers or specific names 100%. Always cross-reference and fact-check.

To reduce these lies, we use RAG (Retrieval-Augmented Generation). Think of this as an "Open Book Exam." Instead of letting the AI guess, a RAG system first searches a reliable source, like your company's handbook, and tells the AI: "Only answer based on this text." AWS provides a great deep dive into this architecture. We also use Prompt Engineering (giving clear, detailed instructions) and Guardrails (safety fences that block dangerous or wrong answers) to keep the AI on the right track.
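The "Open Book Exam" flow can be sketched in a few lines: find the most relevant passage, then build a prompt that restricts the model to it. This is a minimal illustration under stated assumptions. The retrieval here is plain word overlap, whereas real RAG systems usually use embeddings and a vector search, and the names `retrieve` and `buildPrompt` and the handbook entries are invented for the example.

```typescript
interface Doc {
  id: string;
  text: string;
}

// Lowercases the text and keeps words longer than three characters,
// which crudely filters out filler words like "the" or "how".
function keywords(text: string): string[] {
  return text.toLowerCase().split(/[^a-z0-9]+/).filter(w => w.length > 3);
}

// Counts how many distinct question keywords appear in the document.
function overlap(question: string, doc: Doc): number {
  const docWords = keywords(doc.text);
  const seen: Record<string, boolean> = Object.create(null);
  let hits = 0;
  for (const w of keywords(question)) {
    if (!seen[w] && docWords.indexOf(w) !== -1) hits++;
    seen[w] = true;
  }
  return hits;
}

// Retrieval step: the best-matching document, or undefined if nothing matches.
function retrieve(question: string, docs: Doc[]): Doc | undefined {
  let best: Doc | undefined = undefined;
  let bestScore = 0;
  for (const doc of docs) {
    const s = overlap(question, doc);
    if (s > bestScore) {
      best = doc;
      bestScore = s;
    }
  }
  return best;
}

// Augmentation step: the prompt tells the model to stay inside the source.
function buildPrompt(question: string, docs: Doc[]): string {
  const source = retrieve(question, docs);
  if (!source) {
    return 'No relevant source was found. Reply "I don\'t know".\nQuestion: ' + question;
  }
  return 'Answer only from the text below. If the answer is not there, say "I don\'t know".\n'
    + 'Source [' + source.id + ']: ' + source.text + '\nQuestion: ' + question;
}

const handbook: Doc[] = [
  { id: 'leave', text: 'Employees receive 12 days of annual leave per year.' },
  { id: 'wifi', text: 'The office wifi password is rotated every month.' },
];
console.log(buildPrompt('How many days of annual leave do employees get?', handbook));
```

The resulting prompt would then be sent to the model. The instruction to say "I don't know" when the source is silent is part of what keeps the answer grounded.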

Quick Advice for IT Professionals

At ISB Vietnam, we are not just watching the AI revolution—we are leading it. We believe that AI is most powerful when handled by experts who understand its strengths and its "hallucinations." That’s why we are constantly training our developers to master AI tools, ensuring that our software solutions are not just fast, but smart and secure.


Are you a tech talent looking to work in an environment that embraces AI? We are always looking for passionate people to join our team and push the boundaries of what's possible.

Let’s build something great together—reach out to us today!

Image source: Generated by Gemini

April 23, 2026

Memory-mapped files are one of the most powerful features available to Windows C++ developers. At the center of this mechanism is MapViewOfFile, a function that allows you to treat file contents as if they were part of your program’s memory.

In this blog post, we’ll walk through a complete example and explain every handle involved — what it represents, why it exists, and how it fits into the Windows memory model.

Memory-mapped files are a core part of the Windows memory management system. Instead of manually reading file data into buffers, Windows allows you to map a file directly into your process’s virtual memory. The function responsible for this is MapViewOfFile.

In simple terms:

MapViewOfFile lets you treat a file on disk as if it were an array in memory.

Once mapped, you can read (or write) file contents using normal pointer operations — no repeated calls to ReadFile, no manual buffering.

This mechanism is part of the Win32 API and works together with CreateFile, CreateFileMapping, UnmapViewOfFile, and CloseHandle.

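Traditional file I/O works like this: you open the file, allocate a buffer, call ReadFile to copy a chunk of the file into that buffer, process it, and repeat until you reach the end of the file.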

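Memory mapping removes extra copying. The operating system maps the file's pages straight into your address space and reads them from disk on demand, so your code works on the data in place instead of copying it into a separate buffer.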

This makes memory mapping ideal for large files, random access to file contents, and sharing data between processes.

Mapping a file involves three important objects: the file handle (hFile), the file mapping object (hMapping), and the mapped view (pView).

Let’s walk through each one conceptually.

We start by calling CreateFile.

HANDLE hFile = CreateFile(...);

hFile is a handle to a file object managed by the Windows kernel. It does not contain the file data. Instead, it represents an open file together with the access rights and sharing mode you requested.

Think of it as your program’s official permission slip to access the file.

The file handle tells Windows: “I want to work with this file, and here are my access rights.” Without this handle, you cannot create a file mapping.

CloseHandle(hFile);

Once closed, the program no longer has access to the file.

Next, we call CreateFileMapping.

HANDLE hMapping = CreateFileMapping(hFile, ...);

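This handle represents a file mapping object, which is a kernel object describing which file backs the mapping, the maximum size of the mapping, and the page protection (for example read-only or read-write).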

Important:

This still does not map the file into memory. Instead, it creates a blueprint for mapping.

If hFile is the permission slip to the file, hMapping is the architectural plan for how the file will appear in memory.

Windows utilizes a structured approach by separating file access into three distinct layers: the file object, the mapping object (configuration), and the view (the actual memory mapping).

This decoupled architecture provides significant flexibility, enabling developers to create multiple mappings with varying protection levels and facilitate seamless shared memory between processes.

Furthermore, this separation offers granular control over advanced memory management tasks.

CloseHandle(hMapping);

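This releases your handle to the mapping object. Windows destroys the object itself only after every handle to it has been closed and every view of it has been unmapped.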

Finally, we call MapViewOfFile.

LPVOID pView = MapViewOfFile(hMapping, ...);

This is where the file actually becomes accessible as memory.

Note that pView is not a handle. It is a pointer to virtual memory inside your process. This is where the magic happens.

When you invoke the MapViewOfFile function, Windows performs a sophisticated memory orchestration.

First, it reserves a specific range of address space within your process. It then creates a direct link between this space and the file's data.

Rather than loading the entire file at once, the OS intelligently loads data pages into physical memory only when they are accessed—a process known as 'on-demand paging.'

Consequently, the file ceases to be a distant object on the disk and begins to behave like a standard in-memory array.

You can now do:

char* data = static_cast<char*>(pView);

std::cout << data[0];

No ReadFile, no buffers — just pointer access.

UnmapViewOfFile(pView);

This removes the file from your process’s address space.

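Unlike ReadFile, the OS does not immediately load the entire file. Instead, it reserves address space for the view, loads a page from disk only when your code first touches it (a page fault), and lets the memory manager write modified pages back and evict rarely used ones as needed.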

This mechanism is extremely efficient and is one reason memory-mapped files scale well for large datasets.

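The usual cleanup releases each object in reverse order of creation: UnmapViewOfFile(pView), then CloseHandle(hMapping), then CloseHandle(hFile).

It's important to remember that these elements are interconnected: the view is tied to the mapping object, and the mapping object is tied to the file. Windows keeps internal references, so the file and the mapping stay alive until the last view is unmapped and the last handle is closed, but reverse-order cleanup keeps ownership easy to follow. What does cause crashes is reading or writing through pView after UnmapViewOfFile, and skipping any of the three calls leaks resources.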

One powerful feature of file mappings:

If multiple processes open the same-named mapping object, they can share memory.

Instead of mapping a disk file, you can even pass INVALID_HANDLE_VALUE to CreateFileMapping to create shared memory backed by the system paging file.

This is a common IPC (Inter-Process Communication) technique in Win32.

MapViewOfFile is not just a function — it’s a gateway into Windows’ virtual memory system.

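The process involves opening the file with CreateFile, describing the mapping with CreateFileMapping, mapping a view with MapViewOfFile, working with the data through a pointer, and releasing everything with UnmapViewOfFile and CloseHandle.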

While it may feel lower-level compared to C++ standard streams, it gives you fine-grained control and can be considerably faster for large files and random access.

If you're building performance-critical Windows applications — such as game engines, database systems, or file-processing tools — understanding memory-mapped files will make you a significantly stronger systems developer.

Reference:

https://learn.microsoft.com/en-us/windows/win32/api/memoryapi/nf-memoryapi-mapviewoffile

https://learn.microsoft.com/en-us/windows/win32/memory/file-mapping

April 23, 2026

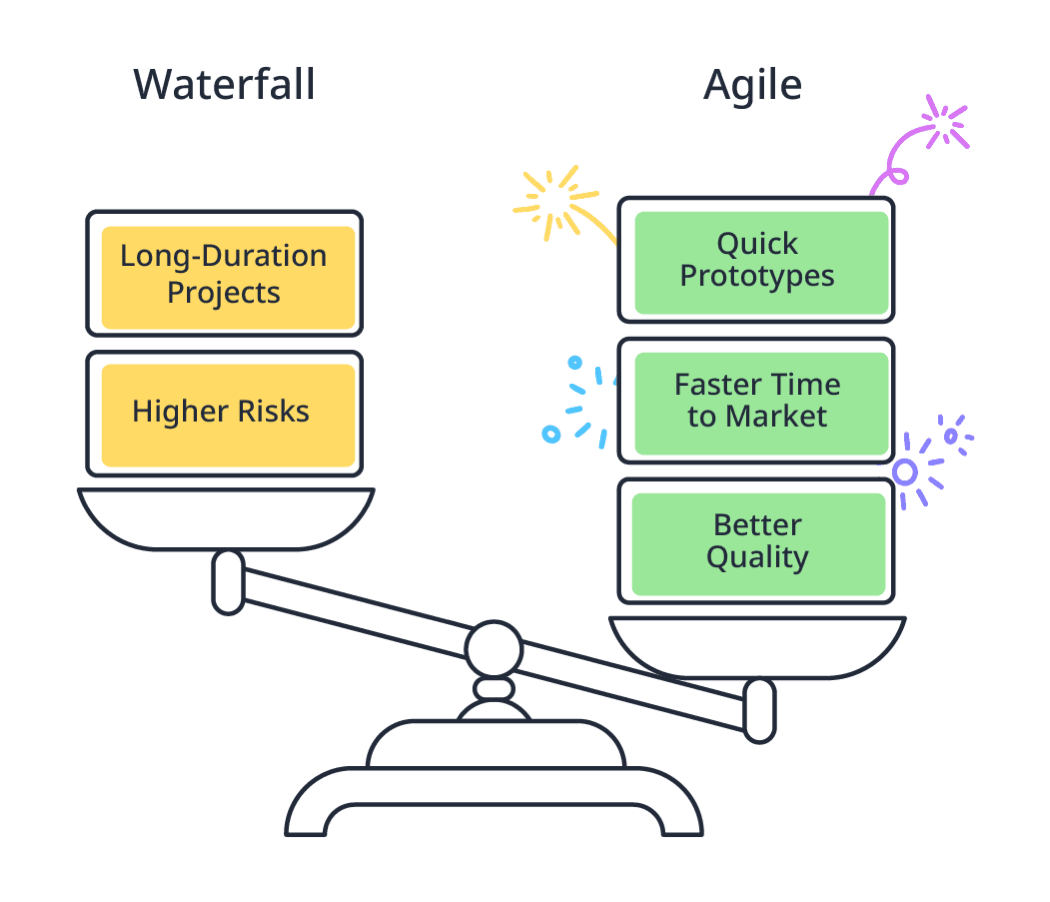

In the development world, Mendix is often discussed as a tool for rapid application building. However, that speed doesn't just come from reducing lines of code; it stems from its perfect integration with the Agile methodology. If you consider Low-code the engine, then Agile is the steering system that keeps the project on track.

source: https://academy.mendix.com/index3.html#/lectures/3136

Many software projects fail not because of poor code, but due to the rigidity of the Waterfall process. In a rapidly changing market, fixing the scope at the very beginning is a massive risk.

Agile in Mendix inverts the traditional project management triangle:

Fixed Resources and Time: You know exactly what you have and how long a Sprint lasts—typically two weeks.

Flexible Scope: Instead of doing everything halfway, the team focuses on completing the most valuable features to deliver a working product after every cycle.

Being Agile is less about the mechanics and more about the Agile Mindset. For a Mendix Developer, this mindset boils down to three principles:

Small and Focused: Breaking work into smaller pieces (User Stories) increases focus and enables quick results.

Feedback is a Gift: It is better to fail early and fix early than to receive negative feedback after the project has ended.

Ownership: In an Agile team, there is no "micro-manager." Every member takes initiative and responsibility for their own tasks.

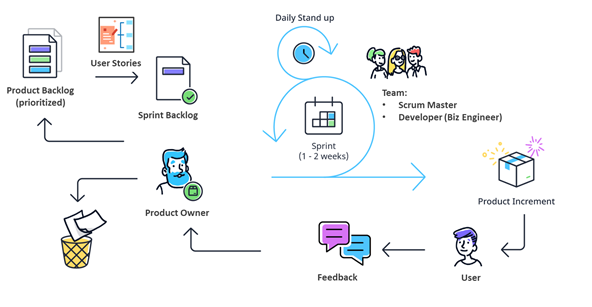

Mendix optimizes development through cross-functional teams:

source: https://academy.mendix.com/link/modules/390/The-Agile-Methodology

Core Team: Consists of the Product Owner (vision manager), Scrum Master (process guardian), and 2-3 Business Engineers (the ones building the app).

Subject Matter Experts (SMEs): Experts in UX/UI, Security, or Integration "fly in" when a Sprint requires deep specialized knowledge and leave once the task is complete.

Mendix doesn't just keep Agile on paper; the platform provides powerful execution tools:

source: https://academy.mendix.com/link/modules/390/The-Agile-Methodology

Epics & User Stories: Manage the backlog and roadmap directly on the Portal using the standard structure: "As a... I want... so that...".

Feedback Widget: This is the direct bridge between users and developers. Feedback is sent straight to the Portal for the PO to evaluate and include in the next Sprint.

Lean Thinking: Leveraging Reusable Components reduces waste and allows the team to focus on creating new value.

To maximize the effectiveness of a Mendix project, you should follow a 5-step roadmap:

Understand the Context: Know exactly why the project needs Agile.

Establish the Mindset: Build trust and transparency within the team.

Define Roles Clearly: Ensure everyone understands their authority and responsibilities.

Sprint 0: Prepare infrastructure, design wireframes, and align goals before coding begins.

Execute and Improve: Build while reflecting to optimize performance continuously.

Conclusion: Developing on Mendix without Agile is like having a supercar but driving it on a road full of potholes. Combine the power of Low-code with the flexibility of Agile to create truly breakthrough products.
