Have you ever heard of the Japanese term "cushion words"? Today, I would like to introduce "cushion words", some of the politest expressions in Japanese.

1. What are "cushion words"?

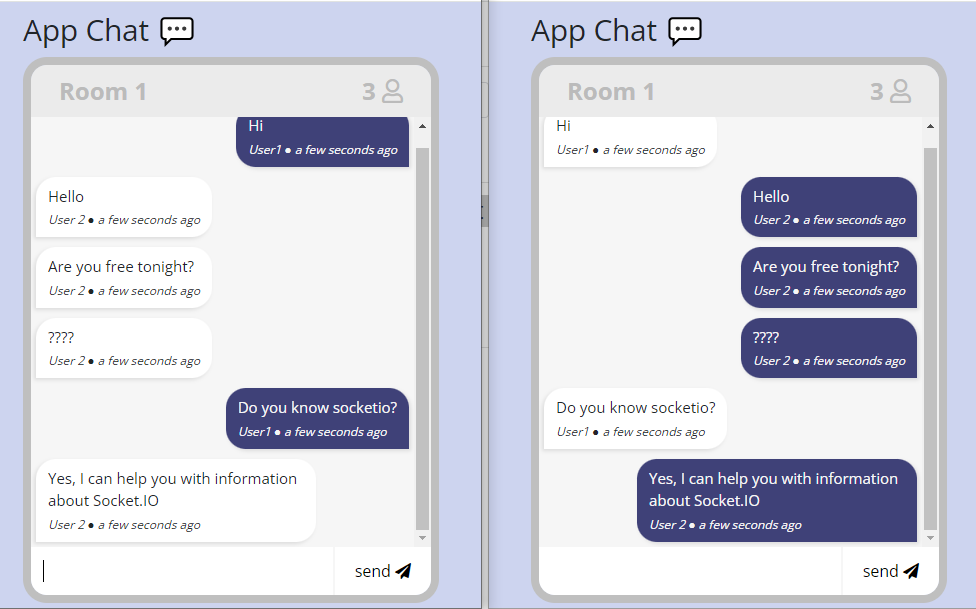

“Cushion words” are also called business pillow words. Added as a lead-in to what you want to say, they soften the impression your words make on the other person compared with saying them directly. They act just like a “cushion” that softens the impact of your words.

They also let you express feelings such as “sorry” (申し訳ない) or “I may be a nuisance to the other person, but…” (もしかしたら相手としては迷惑かもしれないけれど…) in a way that shows consideration for the other person.

2. Situations where "cushion words" should be used and their effects

They are mainly used when you want to make a request, refuse something, or offer an opinion or counterargument. By learning a variety of cushion words and choosing among them appropriately, you should be able to phrase things in a way that resonates with the other person. They also make it easier to convey that “You are an important person who I want to take care of”.

You can use “cushion words” when talking to customers, seniors, superiors, etc.

When you offer something without knowing whether the other person needs it, or when you want to ask a question, cushion words such as “If you don’t mind” (もしよろしければ) or “If it’s not a problem” (差し支えなければ) are used. These phrases are useful when you want to draw out information from the other party during a business negotiation or meeting, or when making a proposal.

Cushion words are used not only in face-to-face communication, but also in emails and documents, where it is difficult to convey nuances and feelings. Using them avoids giving the impression that you are bossy or one-sided, and shows that you are considerate. Cushion words are essential for building relationships of trust.

Here are some situations in which cushion words are used.

a. When asking something.

「お尋ねしてよろしいでしょうか」 (May I ask?)

「失礼ですが」 (Excuse me)

「差し支えなければ」 (If it’s not a problem)

「お教えいただきたいのですが」 (I would like to ask you to tell me.)

Ex: 失礼ですが、お名前をフルネームでお聞かせいただけますでしょうか。 (Excuse me, but could you please tell me your full name?)

b. When requesting something.

「恐縮ですが」 (I’m sorry to trouble you, but)

「ご面倒をお掛けしますが」 (I apologize for the inconvenience.)

「ご迷惑とは存じますが」 (I understand this is a nuisance, but)

「こちらの都合で恐れ入りますが」 (I’m sorry to ask this for our own convenience, but)

Ex: ご面倒をお掛けしますが、お引き受けいただけないでしょうか。 (I’m sorry to trouble you, but would you be willing to take this on?)

c. When you want cooperation.

「恐れ入りますが」 (Excuse me)

「お手数をおかけしますが」 (I apologize for the inconvenience)

「ご面倒をおかけします」 (I'm sorry for the inconvenience)

「お忙しいところ恐れ入りますが」 (I’m sorry to bother you when you are busy, but)

Ex: 恐れ入りますが、〇日までにメールでお返事をいただいてもよろしいでしょうか。 (I’m sorry to trouble you, but could you please reply by email by day 〇?)

d. When refusing.

「あいにく」(Unfortunately)

「残念なのですが」(Unfortunately)

「お気持ちはとてもよくわかるのですが」 (I understand very well how you feel, but)

「せっかくのお申し出をいただき、大変ありがたいのですが」 (I am very grateful for your kind offer, but)

「ご期待に添えず、大変申し訳ないのですが」 (I am very sorry that I cannot meet your expectations, but)

「私どもの力不足で、大変恐縮なのですが」 (I am very sorry that this is due to our own lack of ability, but)

Ex: ご希望のデザインには添えず、申し訳ございません。再度、見直しを行います。 (I am sorry that we could not deliver the design you wanted. We will review it again.)

e. When giving an opinion or counterargument.

「僭越ながら」 (Please allow me (to say something))

「おっしゃることは重々承知をしておりますが」 (I am fully aware of what you are saying.)

「余計なこととは存じますが」 (I know this may be uncalled for, but)

「私の考え過ぎかもしれませんが」 (Maybe I’m overthinking this, but)

Ex: おっしゃることは重々承知をしておりますが、今回はA案をご提案させていただけないでしょうか。 (I am fully aware of what you are saying, but might I propose Plan A this time?)

f. When a request cannot be met.

「せっかくお声をかけていただいたのですが」 (Thank you for taking time to contact me but)

「ぜひご期待にお応えしたかったのですが」 (I really wanted to meet your expectations but)

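「身に余るお話、光栄なのですが」 (I am honored by an offer that is more than I deserve, but)

「申し上げにくいのですが」 (This is hard for me to say, but)

Ex: 身に余るお話、光栄なのですが、今回は辞退させていただいてもよろしいでしょうか? (I am honored by an offer that is more than I deserve, but may I respectfully decline this time?)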

g. When you want something to be improved.

「細かいことを言ってしまい恐縮ですが」 (I apologize for mentioning such details)

「こちらの都合ばかりで申し訳ございませんが」 (I’m sorry that this is all for our own convenience, but)

「説明が足りず失礼いたしました」 (I apologize for not explaining enough)

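Ex: 説明が足りず失礼いたしました。決定の理由を2つ以上あげていただけると助かります。 (I apologize for not explaining enough. It would be helpful if you could give two or more reasons for the decision.)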

h. When offering assistance.

「もしよろしければ」 (If you do not mind)

「私でよければ」 (If someone like me will do)

「差し支えなければ」 (If it's not a problem)

「お力になれるのであれば」 (If I can help)

Ex: もしよろしければ〇〇までご提案させていただきます。 (If you do not mind, I would be glad to make a proposal covering up to 〇〇.)

3. Examples of NG (incorrect) usage of cushion words that are easy to get wrong

「申し訳ございません」 (I’m sorry) is a cushion word used when you cannot meet someone’s request or when you apologize. If you use it casually when no apology is needed, it will carry less weight in the situations where an apology is actually called for.

NG example:

「申し訳ございませんが、お名前をお聞かせいただけますか」 (I’m sorry, but could you tell me your name?)

「申し訳ございませんが、こちらにご記入いただいてもよろしいでしょうか」 (I’m sorry, but would you mind filling this in?)

OK example:

「申し訳ございませんが、セール品につきましては返品をご遠慮いただいております」 (I’m sorry, but we do not accept returns on sale items.)

- 「差し支えなければ」 (If you don’t mind)

「差し支えなければ」 (If you don’t mind) carries the meaning “Please feel free to decline if this is inconvenient.” It can therefore only be used when no problem arises even if the other party refuses. For example, be careful not to use it when you answer a telephone call and need the caller’s name in order to connect them to the person in charge.

NG example:

「差し支えなければ、お名前を伺ってもよろしいでしょうか」 (If you don’t mind, may I ask your name?)

→ Replace 「差し支えなければ」 with 「恐れ入りますが」 (Excuse me, but).

OK example:

「差し支えなければ資料をご自宅にお送りしましょうか」 (If you don’t mind, shall I send the materials to your home?)

4. Precautions when using cushion words

Cushion words are soft expressions that give a polite impression, but if used too often, they can come across as long-winded and make it difficult to get the main point across.

Cushion words can be used in many settings, such as emails, chats, telephone calls, and face-to-face meetings. In phone calls and face-to-face conversations in particular, the listener cannot reread what was said, so your intent is more easily lost; avoid using cushion words too frequently there.

5. How to respond to 「差し支えなければ」 (If you don’t mind)

So far, we have looked at 「差し支えなければ」 (If you don’t mind) as an expression used when asking someone to do something, but there may be times when the other person makes a request of us with “If you don’t mind”. When accepting the request, a response such as 「承知しました」 (I understand) or 「かしこまりました」 (Certainly) is appropriate.

Also, as mentioned above, 「差し支えなければ」 (If you don’t mind) is an expression that leaves the decision on whether or not to accept the request to you, so of course you can decline it.

When you say “No”, be sure to show that you are sorry.

For example, a response such as 「大変申し訳ないですが、〇〇があるため、行うことが難しい状況です。お役に立てず恐縮でございます」 (I’m very sorry, but because of 〇〇, it is difficult for me to do this. I apologize that I cannot be of help.) will leave a good impression.

6. Use cushion words appropriately to facilitate communication.

Cushion words are used in a variety of business situations to show consideration for others and facilitate communication.

It’s a good idea to learn multiple phrases and the situations each one suits, as they will be useful when you need to say something that is difficult to say, such as when declining a request or making a counterargument.

Make sure to use cushion words appropriately depending on the situation and maintain smooth communication and good relationships.

Reference Image and Document Source:

- https://www.pexels.com/

- https://go.chatwork.com/

- https://allabout.co.jp/